Initial Deployment - Day 0

AVD Lab Guide Overview

The AVD Lab Guide is a follow-along set of instructions to deploy a dual data center L2LS fabric design. The data model overview and details can be found here. In the following steps, we will explore updating the data models to add services, ports, and WAN links to our fabrics and test traffic between sites.

In this example, the ATD lab is used to create the L2LS Dual Data Center topology below. The IP Network cloud (orange area) is pre-provisioned and consists of the border and core nodes in the ATD topology. Our focus will be creating the L2LS AVD data models to build and deploy configurations for Site 1 and Site 2 (blue areas) and connect them to the IP Network.

Host Addresses

| Host | IP Address |
|---|---|
| s1-host1 | 10.10.10.100 |
| s1-host2 | 10.20.20.100 |
| s2-host1 | 10.30.30.100 |
| s2-host2 | 10.40.40.100 |

Step 1 - Prepare Lab Environment

Access the ATD Lab

Connect to your ATD Lab and start the Programmability IDE. Next, create a new Terminal.

Fork and Clone branch to ATD Lab

An ATD Dual Data Center L2LS data model is posted on GitHub.

- Fork this repository to your own GitHub account.

- Next, clone your forked repo to your ATD lab instance.

Configure your global Git settings.
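The values depend on your own identity. A minimal sketch, assuming the placeholder name and email below (both made up, replace them with your own), could look like this:

```shell
# Placeholder identity values; substitute your own name and email.
git config --global user.name "Your Name"
git config --global user.email "you@example.com"

# Read the values back to confirm they were stored.
git config --global --get user.name
git config --global --get user.email
```

Git records these values in every commit you make from the lab instance, so set them before committing changes to your fork.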

Update AVD

AVD has been pre-installed in your lab environment. However, the installed version may be older (or, in some cases, newer) than the one this lab expects. The following steps will update AVD and its modules to the versions required for the lab.

pip3 config set global.break-system-packages true

pip3 config set global.disable-pip-version-check true

pip3 install -r requirements.txt

ansible-galaxy collection install -r requirements.yml

Important

You must run these commands when you start your lab or a new shell (terminal).

Change To Lab Working Directory

Now that AVD is updated, let's move into the appropriate directory so we can access the files necessary for this L2LS Lab!

Setup Lab Password Environment Variable

Each lab comes with a unique password. We set an environment variable called LABPASSPHRASE with the following command. The variable is later used to generate local user passwords and connect to our switches to push configs.

export LABPASSPHRASE=$(grep "password:" /home/coder/.config/code-server/config.yaml | awk '{print $2}')

You can verify that the password is set. This is the same password displayed when you click the link to access your lab.

Important

You must run this step when you start your lab or a new shell (terminal).
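To see how the extraction works, the sketch below runs the same grep and awk pipeline against a throwaway file. The helper name `read_lab_passphrase`, the variable `demo_passphrase`, and the sample file contents are all made up for illustration; your real config file will hold a different value.

```shell
# Hypothetical helper: print the second field of every line containing "password:".
read_lab_passphrase() {
  grep "password:" "$1" | awk '{print $2}'
}

# Throwaway sample file with invented values (not a real lab password).
sample_config=$(mktemp)
printf 'bind-addr: 127.0.0.1:8080\nauth: password\npassword: example-passphrase\ncert: false\n' > "$sample_config"

# "auth: password" is skipped because it has no colon after the word "password".
demo_passphrase=$(read_lab_passphrase "$sample_config")

# Guard you can reuse in a new shell before running any playbook.
if [ -n "$demo_passphrase" ]; then
  echo "passphrase is set"
else
  echo "passphrase is empty" >&2
fi

rm -f "$sample_config"
```

The same non-empty check applied to `$LABPASSPHRASE` is a quick way to notice that you opened a new terminal and forgot to export the variable again.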

Prepare WAN IP Network and Test Hosts

The last step in preparing your lab is to push pre-defined configurations to the WAN IP Network (cloud) and the four hosts used to test traffic. The spines from each site will connect to the WAN IP Network with P2P links. The hosts (two per site) have port-channels to the leaf pairs and are pre-configured with an IP address and route to reach the other hosts.

Run the following to push the configs.

Step 2 - Build and Deploy Dual Data Center L2LS Network

This section will review and update the existing L2LS data model. We will add features to enable VLANs, SVIs, connected endpoints, and P2P links to the WAN IP Network. By the end of the lab, you will have enabled an L2LS dual data center network through automation with AVD. YAML data models and Ansible playbooks will be used to generate EOS CLI configurations and deploy them to each site. We will start by focusing on building out Site 1 and then repeat similar steps for Site 2. Finally, we will enable connectivity to the WAN IP Network to allow traffic to pass between sites.

Summary of Steps

- Build and Deploy Site 1

- Build and Deploy Site 2

- Connect sites to WAN IP Network

- Verify routing

- Test traffic

Step 3 - Site 1

Build and Deploy Initial Fabric

The initial fabric data model key/value pairs have been pre-populated in the following group_vars files in the sites/site_1/group_vars/ directory.

- SITE1_FABRIC_PORTS.yml

- SITE1_FABRIC_SERVICES.yml

- SITE1_FABRIC.yml

- SITE1_LEAFS.yml

- SITE1_SPINES.yml

Review these files to understand how they relate to the topology above.

At this point, we can build and deploy our initial configurations to the topology.

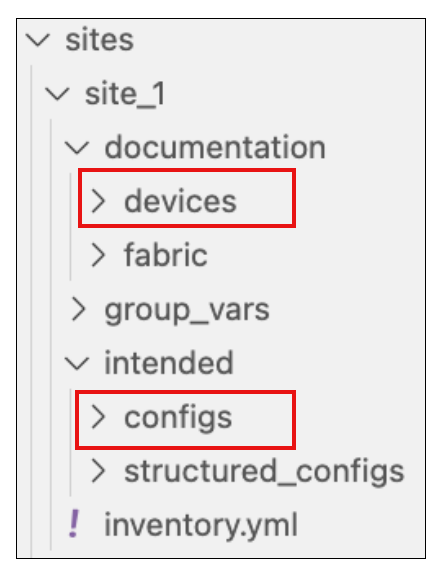

AVD creates a separate Markdown documentation file and EOS configuration file per switch. You can review these files in each site's documentation and intended folders.

Now, deploy the configurations to Site 1 switches.

Log in to your switches to verify the current configs (show run) match the ones created in the intended/configs folder.
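If you prefer a file-based comparison, one option is to save the output of show run to a local file and diff it against the intended config. The sketch below assumes you have already saved that output yourself; the helper name `compare_config` and the file names in the usage note are hypothetical. It ignores lines that start with `!`, since EOS uses them for comments and section separators.

```shell
# Hypothetical helper: compare a saved running-config with an intended config.
# usage: compare_config RUNNING_FILE INTENDED_FILE
compare_config() {
  cmp_running=$(mktemp)
  cmp_intended=$(mktemp)
  # Drop EOS comment/separator lines (optionally indented) before comparing.
  grep -v '^[[:space:]]*!' "$1" > "$cmp_running" || true
  grep -v '^[[:space:]]*!' "$2" > "$cmp_intended" || true
  # diff exits 0 when the files match and 1 when they differ.
  diff -u "$cmp_intended" "$cmp_running" && cmp_status=0 || cmp_status=$?
  rm -f "$cmp_running" "$cmp_intended"
  return "$cmp_status"
}
```

For example, `compare_config s1-leaf1-running.cfg intended/configs/s1-leaf1.cfg` prints nothing and returns 0 when the two files agree apart from `!` lines. Treat any remaining differences as a prompt for manual review rather than proof of a problem.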

You can also check the current state for MLAG, VLANs, interfaces, and port-channels.

The basic fabric, with MLAG peers and port-channels between the leafs and spines, is now created. Next up, we will add VLAN and SVI services to the fabric.

Add Services to the Fabric

The next step is to add VLANs and SVIs to the fabric. The services data model file SITE1_FABRIC_SERVICES.yml is pre-populated with VLANs and SVIs 10 and 20 in the default VRF.

Open SITE1_FABRIC_SERVICES.yml and uncomment lines 1-28, then run the build & deploy process again.
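Uncommenting in the IDE editor is the simplest route. If you would rather do it from the terminal, the sketch below shows one way with sed. It assumes each commented line starts with `#` followed by at most one space; the helper name `uncomment_range` is hypothetical, and the demo file contents are invented rather than taken from the lab's data model.

```shell
# Hypothetical helper: strip one leading "# " (or "#") from lines FIRST..LAST of FILE.
# The original file is kept as FILE.bak.
# usage: uncomment_range FILE FIRST LAST
uncomment_range() {
  sed -i.bak -e "${2},${3}s/^\([[:space:]]*\)# \{0,1\}/\1/" "$1"
}

# Demonstration on a throwaway file with made-up keys.
demo_file=$(mktemp)
printf '# key_a:\n#   key_b: 1\n# still a comment\n' > "$demo_file"
uncomment_range "$demo_file" 1 2
cat "$demo_file"
```

Applied to the lab file, this would be `uncomment_range sites/site_1/group_vars/SITE1_FABRIC_SERVICES.yml 1 28`. Any genuine comment inside the range is uncommented as well, so compare the result with the `.bak` copy before building.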

Tip

Log in to s1-spine1 and s1-spine2 and verify that SVIs 10 and 20 exist.

It should look similar to the following:

                                                                                Address
Interface         IP Address            Status       Protocol               MTU Owner
----------------- --------------------- ------------ -------------- ----------- -------
Loopback0         10.1.252.1/32         up           up                   65535
Management0       192.168.0.10/24       up           up                    1500
Vlan10            10.10.10.2/24         up           up                    1500
Vlan20            10.20.20.2/24         up           up                    1500
Vlan4093          10.1.254.0/31         up           up                    1500
Vlan4094          10.1.253.0/31         up           up                    1500

You can verify that the recent configuration session was created.

Info

When the configuration is applied via a configuration session, EOS will create a "checkpoint" of the configuration. This checkpoint is a snapshot of the device's running configuration as it was prior to the configuration session being committed.

List the recent checkpoints.

View the contents of the latest checkpoint file.

See the difference between the running config and the latest checkpoint file.

Tip

This will show the differences between the current device configuration and the configuration before we ran our make deploy command.

Add Ports for Hosts

Let's configure port-channels to our hosts (s1-host1 and s1-host2).

Open SITE1_FABRIC_PORTS.yml and uncomment lines 17-45, then run the build & deploy process again.

At this point, hosts should be able to ping each other across the fabric.

From s1-host1, run a ping to s1-host2.

PING 10.20.20.100 (10.20.20.100) 72(100) bytes of data.

80 bytes from 10.20.20.100: icmp_seq=1 ttl=63 time=30.2 ms

80 bytes from 10.20.20.100: icmp_seq=2 ttl=63 time=29.5 ms

80 bytes from 10.20.20.100: icmp_seq=3 ttl=63 time=28.8 ms

80 bytes from 10.20.20.100: icmp_seq=4 ttl=63 time=24.8 ms

80 bytes from 10.20.20.100: icmp_seq=5 ttl=63 time=26.2 ms

Site 1 fabric is now complete.

Step 4 - Site 2

Repeat the previous three steps for Site 2.

- Add Services

- Add Ports

- Build and Deploy Configs

- Verify ping traffic between hosts s2-host1 and s2-host2

At this point, you should be able to ping between hosts within a site but not between sites. For this, we need to build connectivity to the WAN IP Network. This is covered in the next section.

Step 5 - Connect Sites to WAN IP Network

The WAN IP Network is defined by the core_interfaces data model. Full data model documentation is located here.

The data model defines P2P links (/31s) on the spines with a stanza per link. See details in the graphic below. Each spine has two links to the WAN IP Network configured on ports Ethernet7 and Ethernet8. OSPF is added to these links as well.
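A /31 contains exactly two addresses that differ only in the last bit, so both ends of a P2P link must come from the same pair. The sketch below checks that property with shell arithmetic; the helper names `ip_to_int` and `same_p2p_31` are hypothetical and not part of AVD.

```shell
# Convert a dotted-quad IPv4 address (any "/prefix" suffix is ignored) to an integer.
ip_to_int() {
  addr=${1%/*}
  old_ifs=$IFS
  IFS=.
  set -- $addr          # split the address into its four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Succeed when the two addresses differ only in the lowest bit (same /31).
same_p2p_31() {
  left=$(ip_to_int "$1")
  right=$(ip_to_int "$2")
  [ $(( left ^ right )) -eq 1 ]
}

same_p2p_31 10.0.0.29/31 10.0.0.28/31 && echo "valid /31 pair"
same_p2p_31 10.0.0.29/31 10.0.0.30/31 || echo "not a /31 pair"
```

The first call succeeds because 29 and 28 differ only in the lowest bit. The second fails because 29 and 30 sit in different /31 networks.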

Add P2P Links to WAN IP Network for Site 1 and 2

Add each site's core_interfaces dictionary (shown below) to the bottom of SITE1_FABRIC.yml and SITE2_FABRIC.yml, respectively.

Site #1

Add the following code block to the bottom of sites/site_1/group_vars/SITE1_FABRIC.yml.

##################################################################
# WAN/Core Edge Links
##################################################################
core_interfaces:
  p2p_links:

    - ip: [ 10.0.0.29/31, 10.0.0.28/31 ]
      nodes: [ s1-spine1, WANCORE ]
      interfaces: [ Ethernet7, Ethernet2 ]
      include_in_underlay_protocol: true

    - ip: [ 10.0.0.33/31, 10.0.0.32/31 ]
      nodes: [ s1-spine1, WANCORE ]
      interfaces: [ Ethernet8, Ethernet2 ]
      include_in_underlay_protocol: true

    - ip: [ 10.0.0.31/31, 10.0.0.30/31 ]
      nodes: [ s1-spine2, WANCORE ]
      interfaces: [ Ethernet7, Ethernet2 ]
      include_in_underlay_protocol: true

    - ip: [ 10.0.0.35/31, 10.0.0.34/31 ]
      nodes: [ s1-spine2, WANCORE ]
      interfaces: [ Ethernet8, Ethernet2 ]
      include_in_underlay_protocol: true

Site #2

Add the following code block to the bottom of sites/site_2/group_vars/SITE2_FABRIC.yml.

##################################################################
# WAN/Core Edge Links
##################################################################
core_interfaces:
  p2p_links:

    - ip: [ 10.0.0.37/31, 10.0.0.36/31 ]
      nodes: [ s2-spine1, WANCORE ]
      interfaces: [ Ethernet7, Ethernet2 ]
      include_in_underlay_protocol: true

    - ip: [ 10.0.0.41/31, 10.0.0.40/31 ]
      nodes: [ s2-spine1, WANCORE ]
      interfaces: [ Ethernet8, Ethernet2 ]
      include_in_underlay_protocol: true

    - ip: [ 10.0.0.39/31, 10.0.0.38/31 ]
      nodes: [ s2-spine2, WANCORE ]
      interfaces: [ Ethernet7, Ethernet2 ]
      include_in_underlay_protocol: true

    - ip: [ 10.0.0.43/31, 10.0.0.42/31 ]
      nodes: [ s2-spine2, WANCORE ]
      interfaces: [ Ethernet8, Ethernet2 ]
      include_in_underlay_protocol: true

Build and Deploy WAN IP Network connectivity

Tip

Daisy chaining "Makesies" is a great way to run a series of tasks with a single CLI command.
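To illustrate the chaining behavior in isolation, the sketch below builds a throwaway Makefile with two made-up targets, `build` and `deploy`, and runs both with one command. These target names are hypothetical; the lab repository's Makefile defines its own targets.

```shell
# Create a throwaway Makefile with two hypothetical targets.
demo_dir=$(mktemp -d)
printf 'build:\n\t@echo build\ndeploy:\n\t@echo deploy\n' > "$demo_dir/Makefile"

# make processes the goals named on the command line in the order given.
chain_output=$(cd "$demo_dir" && make -s build deploy)
echo "$chain_output"

rm -rf "$demo_dir"
```

If a target fails, make stops and does not run the targets after it, which is usually what you want when a deploy depends on a successful build.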

Check Routes on Spine Nodes

From the spines, verify that they can see routes to the following networks where the hosts reside.

- 10.10.10.0/24

- 10.20.20.0/24

- 10.30.30.0/24

- 10.40.40.0/24
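If you save a spine's routing table to a file, a small script can confirm that all four prefixes are present. The sketch below is one way to do that; the helper name `check_routes` and the file name in the usage note are hypothetical, and it only checks that each prefix appears in the text, not how the route was learned.

```shell
# Hypothetical helper: read routing-table text on stdin and report each expected prefix.
# Returns 0 when all four host networks are present, 1 otherwise.
check_routes() {
  route_table=$(cat)
  routes_missing=0
  for prefix in 10.10.10.0/24 10.20.20.0/24 10.30.30.0/24 10.40.40.0/24; do
    if printf '%s\n' "$route_table" | grep -qwF "$prefix"; then
      echo "found   $prefix"
    else
      echo "MISSING $prefix"
      routes_missing=1
    fi
  done
  return "$routes_missing"
}
```

For example, `check_routes < s1-spine1-routes.txt` prints one line per prefix and exits non-zero if any of them is absent.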

Test Traffic Between Sites

From s1-host1, ping both s2-host1 and s2-host2.

Great Success!

You have built a multi-site L2LS network without touching the CLI on a single switch!